
Cut costs, not corners: How AWS Landing Zone simplifies cloud spend for SMBs

Think of AWS Landing Zone as the “move-in ready house” in the cloud. The “walls, plumbing, and electricity” (security, networking, IAM) are already set up. Users just bring in the “furniture and appliances” (apps, data, workloads). It cuts costs for SMBs by eliminating the trial-and-error of building a cloud foundation from scratch.

Instead of spending on custom setups, rework, or security fixes later, SMBs get a pre-configured, best-practice environment that reduces wasted engineering hours, avoids compliance penalties, and scales efficiently. This means SMBs pay only for what they use while accelerating time-to-value.

This article explores why AWS Landing Zones are essential for SMBs looking to establish a cost-efficient cloud foundation.

Key takeaways:

- Secure foundation first: A well-architected AWS Landing Zone ensures multi-account governance, compliance, and identity controls from day one.

- Scalable and resilient: Proper account structure, network segmentation, and automation enable workloads to scale reliably as the business grows.

- Compliance baked in: Continuous monitoring, logging, and guardrails help SMBs meet industry regulations like HIPAA, GDPR, and PCI DSS.

- Operational efficiency: Automation with IaC, CI/CD, and Control Tower reduces manual effort, minimizes errors, and accelerates deployment.

- SMB-first expertise matters: Partnering with Cloudtech helps SMBs adopt AWS Landing Zones quickly, avoid costly mistakes, and focus on innovation and growth.

Should SMBs opt for AWS Landing Zones?

Setting up cloud environments without structure often leads to what AWS calls “cloud sprawl”, with fragmented accounts, inconsistent security controls, and rising costs that are hard to trace. Many SMBs start by spinning up workloads directly, but this DIY approach quickly introduces technical challenges.

For example, managing multiple accounts without a consistent identity and access strategy leads to weak security boundaries, inconsistent tagging makes cost allocation nearly impossible, and lack of centralized logging leaves blind spots for compliance audits. Over time, this increases both operational overhead and risk exposure.

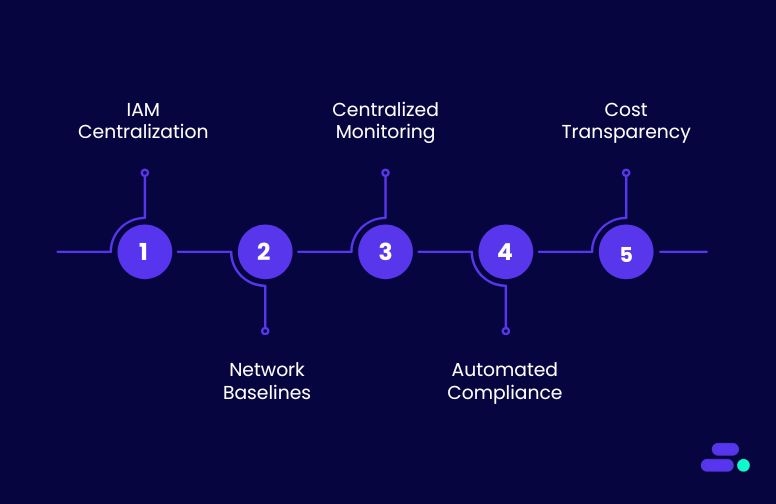

AWS Landing Zones solve these challenges by automating the setup of a multi-account, governed, and secure AWS environment from the start. They embed best practices such as:

- Centralized identity and access management (IAM): Enforces least-privilege policies across accounts and users, avoiding ad-hoc permissions.

- Network baselines: Preconfigured VPCs, subnets, and routing policies prevent common misconfigurations and enable secure communication between workloads.

- Centralized monitoring and logging: AWS CloudTrail and CloudWatch are enabled across all accounts, ensuring every activity is tracked and auditable.

- Automated compliance controls: AWS Config continuously checks for policies like encryption, region restrictions, and resource tagging, keeping environments aligned with regulations.

- Cost transparency: Standardized tagging and account structures make it easier to attribute costs by workload, project, or team, helping SMBs avoid bill shock.

For SMBs, opting for AWS Landing Zones means skipping the painful trial-and-error of building governance manually. Instead, they gain a secure, compliant, and scalable foundation where teams can innovate freely without breaking guardrails.

This balance of freedom with control makes Landing Zones not just a convenience, but a long-term enabler of sustainable cloud growth.

How can SMBs set up an AWS Landing Zone? Simple steps

SMBs usually opt for an AWS Landing Zone to avoid the pitfalls of piecemeal alternatives like building custom governance scripts or relying on ad-hoc account setups that rarely scale. While one-off scripts or manual configurations offer starting points, they often lack the consistency, automation, and multi-account governance needed as workloads grow.

An AWS Landing Zone, on the other hand, provides a pre-engineered blueprint that balances speed of setup with enterprise-grade controls, letting SMBs move faster without sacrificing security or compliance. It’s about choosing a framework that grows with the business, ensures costs and risks are transparent, and avoids costly re-engineering down the line.

SMBs can establish an AWS Landing Zone in several simple steps:

Step 1: Define business & compliance needs

This step is about setting the guardrails before building anything in AWS. It ensures that the landing zone reflects both business goals and compliance obligations such as HIPAA, GDPR, or PCI DSS.

Starting here prevents costly redesigns later, because compliance rules dictate which AWS regions can be used, how data must be encrypted, and what level of monitoring and evidence collection is required. Essentially, this step translates business and regulatory needs into concrete AWS governance policies.

How to perform this step using AWS:

- Define business outcomes such as cost optimization, scalability, or compliance-readiness, and link them to AWS Well-Architected best practices.

- Map industry regulations (HIPAA, GDPR, PCI DSS) to AWS controls using AWS Audit Manager, AWS Security Hub standards, and predefined AWS Conformance Packs.

- Establish data classification and residency rules, applying Amazon S3 encryption, AWS KMS customer managed keys, and AWS Service Control Policies (SCPs) to restrict unapproved regions.

- Set up identity governance through AWS IAM Identity Center for SSO, enforce MFA, and create break-glass access policies for emergencies.

- Determine resilience, logging, and retention requirements using AWS multi-AZ designs, AWS Backup vaults, and immutable AWS CloudTrail logs with long-term Amazon S3 storage.
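
As a rough illustration of the region restriction mentioned above, the sketch below builds an SCP document in Python. The approved regions, the exempted global services, and the `build_region_deny_scp` helper name are assumptions made for this example, not a vetted policy. The `aws:RequestedRegion` condition key is the standard key AWS provides for this kind of restriction.

```python
import json

# Hypothetical list of approved regions; adjust to your residency rules.
APPROVED_REGIONS = ["us-east-1", "us-west-2"]

# Global services are commonly exempted from region restrictions.
# This short list is an assumption, not a complete set.
EXEMPT_ACTIONS = ["iam:*", "organizations:*", "sts:*", "support:*"]


def build_region_deny_scp(approved_regions, exempt_actions):
    """Return an SCP (as a dict) that denies requests outside approved regions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideApprovedRegions",
                "Effect": "Deny",
                # NotAction: the deny applies to everything except these actions.
                "NotAction": list(exempt_actions),
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": list(approved_regions)
                    }
                },
            }
        ],
    }


scp = build_region_deny_scp(APPROVED_REGIONS, EXEMPT_ACTIONS)
print(json.dumps(scp, indent=2))
```

The resulting JSON could be attached to an OU through AWS Organizations. Testing it on a sandbox OU first is a sensible precaution, since an overly strict SCP can lock teams out of services they need.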

Use case: A healthcare SMB migrating its electronic health record system begins by identifying HIPAA as the primary regulatory driver. Business goals include enabling secure telemedicine, reducing on-premises costs by 30%, and ensuring 24/7 availability of patient portals.

To meet these needs, the SMB mandates end-to-end encryption using AWS KMS, limits PHI storage to U.S. regions through SCPs, and enforces MFA for all staff handling patient data. Logging is centralized with AWS CloudTrail and retained for seven years, while resilience is ensured through multi-AZ deployments and automated backups.

These decisions produce a compliance blueprint that directly guides account setup, IAM design, and network architecture in later steps.

Step 2: Set up core accounts and organization structure

Once business and compliance requirements are clear, the next step is to establish the AWS foundation using a multi-account strategy. Instead of running everything in a single account, AWS recommends separating workloads and responsibilities across accounts for stronger security, cost visibility, and compliance alignment.

This is managed through AWS Organizations for account hierarchy and policies, and AWS Control Tower to automate account creation with guardrails. A well-designed account structure ensures that governance, security, and operational needs are consistently enforced from day one.

How to perform this step using AWS:

- Use AWS Organizations to create a multi-account hierarchy, grouping accounts by workload or environment (e.g., production, non-production, sandbox).

- Deploy AWS Control Tower to automate account provisioning and apply preventive and detective guardrails.

- Establish core accounts such as AWS Management (payer), AWS Security, AWS Log Archive, and AWS Shared Services following AWS best practices.

- Apply AWS Service Control Policies (SCPs) at the AWS Organizational Unit (OU) level to restrict unapproved regions, enforce MFA, or limit high-risk actions.

- Configure AWS Consolidated Billing and AWS tagging standards to enable accurate cost allocation across accounts and business units.
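
To make the idea of OU-level policies more concrete, here is a simplified model of an account hierarchy in Python. It only shows which SCPs sit on the path from the root to a given account. The OU names, account names, and policy names are hypothetical, and real SCP evaluation has more nuance (for example, SCPs do not restrict the management account).

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """An organization root, OU, or account in a simplified hierarchy."""
    name: str
    parent: Optional["Node"] = None
    scps: List[str] = field(default_factory=list)


def applicable_scps(node: Node) -> List[str]:
    """Collect SCP names attached from the root down to this node."""
    chain = []
    current = node
    while current is not None:
        chain.append(current)
        current = current.parent
    names = []
    for item in reversed(chain):  # root first, the node itself last
        names.extend(item.scps)
    return names


# Hypothetical structure loosely based on the account types listed above.
root = Node("Root", scps=["DenyLeaveOrganization"])
security_ou = Node("Security", parent=root)
workloads_ou = Node("Workloads", parent=root, scps=["DenyNonUsRegions"])
prod_ou = Node("Production", parent=workloads_ou, scps=["DenyDisableEncryption"])

log_archive = Node("log-archive", parent=security_ou)
prod_portal = Node("prod-patient-portal", parent=prod_ou)

print(applicable_scps(log_archive))
print(applicable_scps(prod_portal))
```

The point of the sketch is the design choice: attaching a policy once at an OU covers every account beneath it, so new accounts inherit the guardrails as soon as they are placed in the right OU.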

Use case: A healthcare SMB moving to AWS uses Control Tower to establish a secure multi-account structure. It creates dedicated accounts for Security (running centralized logging and GuardDuty), Log Archive (storing immutable CloudTrail logs), Shared Services (for networking and monitoring tools), and separate accounts for development and production workloads.

SCPs are applied to block the use of regions outside the U.S., and budgets are set up at the OU level for cost tracking. This structure not only enforces HIPAA compliance but also provides clear operational separation, making audits and incident response much easier.

Step 3: Establish IAM

With the account structure in place, the next step is to standardize how users and applications authenticate and gain access to AWS resources. AWS emphasizes centralized identity and access management to reduce risk, prevent privilege sprawl, and simplify audits.

Using IAM Identity Center (formerly AWS SSO) with an external identity provider ensures a single source of truth for users, while fine-grained roles and policies enforce least privilege. This step also includes defining break-glass access procedures, MFA enforcement, and a strategy for managing service accounts and workloads.

A strong IAM foundation is critical because nearly every compliance framework requires strict identity governance.

How to perform this step using AWS:

- Integrate AWS IAM Identity Center with the corporate identity provider (e.g., Okta, Microsoft Entra ID, Ping Identity) for centralized authentication.

- Create AWS IAM permission sets aligned to roles (e.g., Developer, Auditor, Security Admin) and enforce least privilege.

- Enforce AWS multi-factor authentication (MFA) for all human access, and define break-glass root account procedures with AWS hardware MFA.

- Use AWS IAM roles for applications and cross-account access, avoiding long-lived static credentials.

- Enable AWS IAM Access Analyzer to continuously detect and remediate overly permissive policies.
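
A quick way to picture least privilege is to look for wildcard grants in a policy document. The check below is a coarse heuristic written for illustration only. It is not how IAM Access Analyzer works, and the policy, bucket name, and `find_broad_statements` helper are hypothetical.

```python
def find_broad_statements(policy):
    """Return the Sids of Allow statements that use a bare '*' for Action or Resource."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # IAM permits a single statement object
        statements = [statements]
    flagged = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Action and Resource may each be a string or a list of strings.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        if "*" in actions or "*" in resources:
            flagged.append(stmt.get("Sid", "<no Sid>"))
    return flagged


# Hypothetical developer policy: one scoped statement, one overly broad one.
developer_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "ReadAppBucket",
            "Effect": "Allow",
            "Action": ["s3:GetObject", "s3:ListBucket"],
            "Resource": [
                "arn:aws:s3:::example-app-bucket",
                "arn:aws:s3:::example-app-bucket/*",
            ],
        },
        {
            "Sid": "TooBroad",
            "Effect": "Allow",
            "Action": "*",
            "Resource": "*",
        },
    ],
}

print(find_broad_statements(developer_policy))
```

Some read-only actions legitimately require `"Resource": "*"`, so a flag from a check like this is a prompt for review rather than proof of a problem.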

Use case: A healthcare SMB implements IAM Identity Center to give staff single sign-on access to AWS accounts. Doctors and nurses are assigned read-only roles for dashboards, developers get scoped access to non-production environments, and security admins have elevated privileges with MFA enforced.

Root accounts are locked with hardware MFA and monitored through CloudWatch alarms. Service applications such as the patient portal use IAM Roles with temporary credentials instead of static keys. IAM Access Analyzer runs continuously to flag any overly broad permissions.

This ensures HIPAA compliance by controlling who can access PHI systems and providing clear evidence during audits.

Step 4: Implement network architecture

After accounts and IAM are in place, the next step is to design a secure and scalable network foundation. AWS recommends using a hub-and-spoke model with a centralized networking account, built on VPCs, Transit Gateway, and VPC peering.

The design should enforce security boundaries, support hybrid connectivity, and provide controlled internet access. Networking decisions here directly affect scalability, performance, and compliance, from how workloads connect internally to how external users access applications.

A well-structured network ensures that future workloads can be added without rework, while meeting compliance standards like encryption in transit and data residency.

How to perform this step using AWS:

- Set up a central AWS networking account to host shared resources such as AWS Transit Gateway, AWS Direct Connect, or AWS Site-to-Site VPN connections.

- Design Amazon VPCs per workload or environment, using subnets split across AWS Availability Zones for resilience.

- Implement segmentation by separating public, private, and restricted subnets, applying AWS Network ACLs (NACLs) and Amazon VPC security groups.

- Use private connectivity with AWS PrivateLink, Amazon VPC endpoints, or AWS Transit Gateway to limit exposure of sensitive workloads to the public internet.

- Centralize outbound internet traffic through shared egress points with AWS Network Firewall for inspection, complemented by AWS WAF for inbound web traffic and Amazon GuardDuty for threat detection.
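
Subnet planning is easy to prototype before anything is deployed. The sketch below uses Python's standard `ipaddress` module to carve a VPC range into one subnet per tier and Availability Zone. The CIDR block, AZ names, tier names, and `/20` subnet size are assumptions chosen for the example.

```python
import ipaddress

# Hypothetical inputs; pick ranges that do not overlap with on-prem networks.
VPC_CIDR = "10.0.0.0/16"
AZS = ["us-east-1a", "us-east-1b"]
TIERS = ["public", "private", "restricted"]


def plan_subnets(vpc_cidr, azs, tiers, new_prefix=20):
    """Assign one non-overlapping subnet to each (tier, AZ) pair."""
    network = ipaddress.ip_network(vpc_cidr)
    blocks = list(network.subnets(new_prefix=new_prefix))
    needed = len(azs) * len(tiers)
    if needed > len(blocks):
        raise ValueError("VPC range is too small for the requested subnets")
    plan = {}
    index = 0
    for tier in tiers:
        for az in azs:
            plan[(tier, az)] = blocks[index]
            index += 1
    return plan


plan = plan_subnets(VPC_CIDR, AZS, TIERS)
for (tier, az), subnet in plan.items():
    print(f"{tier:<10} {az:<11} {subnet}")
```

With these inputs only six of the sixteen available `/20` blocks are used, which leaves room to add tiers or Availability Zones later without renumbering.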

Use case: A healthcare SMB hosting a patient portal and EHR system designs its AWS network with strict compliance needs. A dedicated networking account manages Transit Gateway, which connects separate VPCs for production, development, and shared services.

PHI workloads are placed in private subnets with no direct internet access, while doctors access dashboards through a secure VPN. VPC endpoints and PrivateLink are used for connecting to S3 and DynamoDB without traversing the public internet. Outbound traffic flows through a centralized egress VPC with AWS Network Firewall for inspection.

This architecture ensures HIPAA-compliant segmentation, encrypted traffic flows, and secure hybrid connectivity to the SMB’s on-prem clinic systems.

Step 5: Apply baseline security controls

Once the foundation of accounts, IAM, and networking is in place, the next step is to enforce security baselines across the environment. AWS emphasizes a security-first approach, embedding controls that protect workloads before scaling.

Baseline security ensures that all accounts consistently meet governance, compliance, and audit requirements without relying on ad hoc measures. This involves enabling detective controls, securing access, monitoring activity, and enforcing guardrails.

Establishing these controls early reduces risk, prevents misconfigurations, and simplifies audits for standards such as HIPAA, PCI DSS, or SOC 2.

How to perform this step using AWS:

- Enable AWS Security Hub to continuously assess accounts against security frameworks (e.g., CIS AWS Foundations Benchmark).

- Activate Amazon GuardDuty, Amazon Inspector, and Amazon Macie for threat detection, vulnerability scanning, and sensitive data monitoring.

- Centralize logs with AWS CloudTrail, AWS Config, and Amazon CloudWatch, applying retention policies to meet compliance requirements.

- Use Service Control Policies (SCPs) in AWS Organizations to restrict unauthorized or high-risk actions across accounts.

- Apply encryption defaults with AWS Key Management Service (AWS KMS) keys and TLS, and enforce multi-factor authentication (MFA) for identity protection.
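
One common way to enforce encryption in transit on a log bucket is a bucket policy that denies any request not made over TLS. The sketch below builds such a policy as a Python dictionary. The bucket name and the `build_tls_only_bucket_policy` helper are hypothetical, while `aws:SecureTransport` is the standard AWS condition key for this check.

```python
import json


def build_tls_only_bucket_policy(bucket_name):
    """Return an S3 bucket policy that denies requests sent without TLS."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                # Cover both the bucket itself and every object in it.
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }


# Hypothetical bucket name for a centralized log archive.
policy = build_tls_only_bucket_policy("example-central-cloudtrail-logs")
print(json.dumps(policy, indent=2))
```

Because this is an explicit deny, it takes precedence over any allow granted elsewhere, which is what makes it useful as a baseline control.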

Use case: A financial SMB moving its loan processing workloads to AWS applies security baselines from day one. AWS Security Hub continuously checks for noncompliant resources, while GuardDuty alerts on unusual login activity. AWS CloudTrail logs are centralized into a dedicated logging account with immutable Amazon S3 storage and Glacier for long-term retention.

SCPs prevent developers from launching unapproved instance types or disabling encryption. Macie scans S3 buckets to ensure no sensitive customer data is exposed. With these controls, the SMB demonstrates compliance with financial regulations while ensuring proactive detection and rapid response to security events.

Step 6: Automate provisioning

After security baselines are established, the next priority is to ensure that all future resources are created consistently and securely. Manual provisioning often leads to drift, misconfigurations, and higher operational overhead.

AWS recommends Infrastructure-as-Code (IaC) and Control Tower to automate account setup, guardrails, and resource deployment. Automation not only accelerates onboarding but also guarantees compliance, cost control, and security are baked into every workload from day one.

This step shifts organizations from ad hoc provisioning to a repeatable, scalable operating model.

How to perform this step using AWS:

- Use AWS Control Tower Account Factory to automatically provision accounts with governance guardrails.

- Define standardized infrastructure templates with AWS CloudFormation or HashiCorp Terraform for networks, IAM roles, and workloads.

- Implement AWS Service Catalog to enable self-service provisioning of approved architectures.

- Apply CI/CD pipelines with AWS CodePipeline and AWS CodeBuild to automate infrastructure deployments and updates.

- Integrate Infrastructure as Code (IaC) templates with AWS Config and AWS Security Hub to continuously validate compliance post-deployment.
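
As a minimal sketch of what a standardized template might contain, the Python snippet below generates a CloudFormation template for an S3 bucket with default encryption, versioning, and public access blocked. The logical ID, description, and helper name are illustrative assumptions. A real baseline would typically cover far more than one resource.

```python
import json


def build_encrypted_bucket_template(logical_id="DataBucket"):
    """Return a CloudFormation template (as a dict) for a locked-down S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative baseline: encrypted, versioned, private bucket",
        "Resources": {
            logical_id: {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    # Encrypt new objects by default with an AWS KMS key.
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {
                                "ServerSideEncryptionByDefault": {
                                    "SSEAlgorithm": "aws:kms"
                                }
                            }
                        ]
                    },
                    "VersioningConfiguration": {"Status": "Enabled"},
                    "PublicAccessBlockConfiguration": {
                        "BlockPublicAcls": True,
                        "BlockPublicPolicy": True,
                        "IgnorePublicAcls": True,
                        "RestrictPublicBuckets": True,
                    },
                },
            }
        },
    }


template = build_encrypted_bucket_template()
print(json.dumps(template, indent=2))
```

Keeping a template like this in version control and deploying it through a pipeline means every account gets the same settings, and any change to the baseline is reviewed like code.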

Use case: A retail SMB expanding into new regions needs to provision multiple AWS accounts for local teams. Instead of manually creating accounts, they use Control Tower’s Account Factory with pre-approved guardrails.

CloudFormation templates automatically deploy VPCs, IAM roles, and encryption policies in every account. Developers request resources via Service Catalog, ensuring they only launch compliant architectures. CI/CD pipelines deploy updates without downtime, while Config validates every new resource against security policies.

With automation in place, the retail SMB scales confidently, saving time and reducing human error.

Step 7: Enable cost management tools

Even the most secure and scalable landing zone can fail business expectations if costs spiral out of control. AWS recommends embedding cost visibility and accountability early in the foundation.

By enabling cost management tools, organizations ensure resources are tagged, budgets are enforced, and spending is continuously tracked. This step aligns cloud operations with financial governance, giving SMBs predictability and avoiding unexpected overruns.

It transforms cloud adoption from a technical exercise into a financially sustainable operating model.

How to perform this step using AWS:

- Define cost allocation tags and enforce tagging policies through AWS Organizations.

- Enable AWS Budgets to set alerts for overspending or threshold breaches.

- Use AWS Cost Explorer for trend analysis, forecasting, and identifying optimization opportunities.

- Leverage AWS CUR (Cost and Usage Reports) for granular billing insights, integrated with BI tools.

- Apply Service Quotas and SCPs to prevent uncontrolled scaling or resource misuse.
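
The value of consistent tagging shows up as soon as costs are grouped by tag. The sketch below totals spend per project tag and flags projects that have reached 80% of their budget. The line items, tag key, budget figures, and threshold are invented for illustration and do not reflect the actual format of AWS billing data.

```python
from collections import defaultdict


def cost_by_tag(line_items, tag_key):
    """Sum cost per tag value; items missing the tag land under 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(tag_key, "untagged")
        totals[owner] += item["cost_usd"]
    return dict(totals)


def budget_alerts(totals, budgets, threshold=0.8):
    """Return the projects whose spend has reached the threshold share of budget."""
    return sorted(
        name
        for name, spent in totals.items()
        if name in budgets and spent >= threshold * budgets[name]
    )


# Hypothetical monthly line items.
line_items = [
    {"service": "EC2", "cost_usd": 400.0, "tags": {"project": "portal"}},
    {"service": "RDS", "cost_usd": 250.0, "tags": {"project": "portal"}},
    {"service": "Athena", "cost_usd": 120.0, "tags": {"project": "analytics"}},
    {"service": "S3", "cost_usd": 30.0, "tags": {"project": "backup"}},
    {"service": "EC2", "cost_usd": 75.0, "tags": {}},
]
budgets = {"portal": 800.0, "analytics": 500.0, "backup": 100.0}

totals = cost_by_tag(line_items, "project")
print(totals)
print(budget_alerts(totals, budgets))
```

The `untagged` bucket is the number to watch: spend that cannot be attributed to a project is usually the first sign that tagging rules are not being enforced.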

Use case: A healthcare SMB migrating its patient portal to AWS worries about unpredictable billing. They set up tagging policies to separate costs by project (portal, analytics, backup). AWS Budgets sends alerts when monthly spend nears limits, while Cost Explorer highlights underutilized EC2 instances.

By integrating Cost and Usage Reports into QuickSight, finance teams gain detailed dashboards of cloud spend. Service Quotas cap resource growth, reducing the risk of runaway costs. With these controls, the SMB balances compliance-driven workloads with predictable financial outcomes.

Step 8: Validate and iterate

Building a landing zone is not a one-time activity. It is a living framework that must evolve as business priorities, compliance requirements, and AWS services change. AWS emphasizes a cycle of validation and iteration to ensure the foundation remains secure, cost-optimized, and aligned with governance.

Continuous feedback from audits, operations, and business stakeholders helps refine the setup, ensuring the landing zone matures in tandem with the organization’s growth. This step embeds agility and resilience into the cloud journey.

How to perform this step using AWS:

- Use AWS Config and Security Hub to continuously validate security and compliance baselines.

- Run Well-Architected Reviews to identify gaps and apply AWS best practices.

- Enable CloudWatch and CloudTrail for ongoing monitoring and operational validation.

- Integrate Change Management via IaC (CloudFormation/Terraform) for safe, iterative updates.

- Conduct periodic operational and financial reviews to adjust policies, budgets, and resilience targets.
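
Conceptually, validation comes down to comparing what the baseline says against what is actually deployed. The toy comparison below illustrates that idea only. It is not how AWS Config evaluates rules, and the setting names and values are hypothetical.

```python
def find_drift(baseline, observed):
    """Return baseline settings whose observed value differs or is missing."""
    drift = {}
    for key, expected in baseline.items():
        actual = observed.get(key)
        if actual != expected:
            drift[key] = {"expected": expected, "actual": actual}
    return drift


# Hypothetical baseline agreed during the compliance review.
baseline = {
    "s3_default_encryption": "aws:kms",
    "cloudtrail_enabled": True,
    "log_retention_days": 2555,  # roughly seven years
}

# Hypothetical snapshot of one account's current settings.
observed = {
    "s3_default_encryption": "AES256",
    "cloudtrail_enabled": True,
    "log_retention_days": 365,
}

for setting, detail in find_drift(baseline, observed).items():
    print(f"{setting}: expected {detail['expected']}, found {detail['actual']}")
```

Running a comparison like this on a schedule, and treating every difference as either a fix or a deliberate baseline update, is one simple way to keep the review cycle honest.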

Use case: A healthcare SMB initially designs its landing zone for HIPAA compliance and patient data management. Over time, new services like AI-driven diagnostics are added, requiring tighter identity controls and updated data retention rules.

AWS Config flags non-compliant S3 buckets, while Security Hub highlights gaps in encryption policies. Quarterly Well-Architected Reviews guide incremental improvements, and Terraform enables consistent updates without manual drift.

By iterating continuously, the SMB ensures its landing zone evolves securely, cost-effectively, and in compliance with healthcare regulations.

Building a secure, compliant, and scalable AWS landing zone is simpler with guidance from an AWS partner like Cloudtech. Beyond technical expertise, a partner ensures governance and compliance are embedded from day one, architectures scale efficiently with business demand, and operational costs remain predictable and optimized.

How does Cloudtech help SMBs build and scale on AWS Landing Zone?

Building in the cloud can feel complex, but starting with a well-architected AWS Landing Zone changes the game. It provides a secure, compliant, and scalable foundation where workloads can grow without surprises. Applications deploy faster and run reliably, and teams spend less time firefighting infrastructure issues.

With Cloudtech guiding the way, SMBs can take full advantage of the landing zone: enforcing governance and compliance from day one, automating routine operations, and embedding resilience and monitoring directly into the environment.

This approach lets leaders focus on innovation and growth instead of managing scattered infrastructure, giving small teams the power to compete with much larger organizations confidently and cost-effectively.

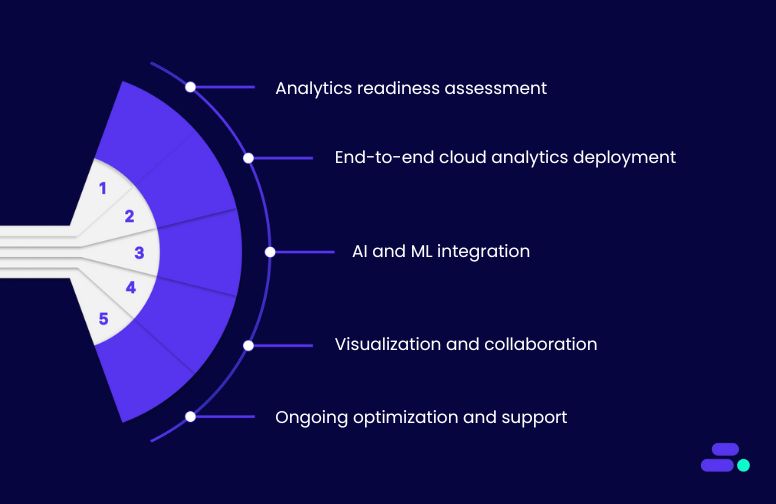

Key Cloudtech services for SMBs to scale on AWS Landing Zones:

- Landing zone assessment & strategy: Review existing cloud or on-prem environments and design a multi-account AWS landing zone aligned with governance, compliance, and business objectives.

- Account provisioning & organization design: Automate account creation and organizational units using AWS Control Tower and AWS Organizations for secure, scalable foundations.

- Identity & access management: Centralize authentication with AWS IAM Identity Center, enforce MFA, and define roles and permission sets aligned with landing zone best practices.

- Network architecture & segmentation: Build secure VPCs, subnets, and connectivity patterns with Transit Gateway, VPC endpoints, and private networking to enforce compliance and reduce exposure.

- Baseline security & compliance automation: Integrate AWS Security Hub, GuardDuty, Config, and Service Control Policies to continuously enforce guardrails and audit-ready configurations.

Through this approach, SMBs can launch features faster, scale confidently, maintain compliance, and ensure high-performing, secure applications while optimizing costs.


Wrapping up

With a well-architected AWS Landing Zone, the cloud environment becomes more than infrastructure. It becomes a foundation for growth, resilience, and operational efficiency.

Partnering with an AWS expert like Cloudtech helps SMBs navigate the complexities of multi-account design, governance, and compliance, avoiding costly missteps while accelerating their journey from setup to production-ready workloads.

By combining AWS best practices with an SMB-first approach, Cloudtech ensures that the landing zone is secure, scalable, and ready to support evolving business needs.

Now is the time to lay a strong cloud foundation that future-proofs your operations. Cloudtech can help you get there.

FAQs

1. What makes an AWS Landing Zone different from a standard AWS account?

An AWS Landing Zone provides a pre-configured, multi-account environment with built-in governance, security guardrails, and automation. Unlike a single AWS account, it separates workloads, applies consistent policies, and scales securely as the business grows.

2. How quickly can SMBs deploy an AWS Landing Zone?

With tools like AWS Control Tower and guidance from an AWS partner, SMBs can deploy a secure landing zone in weeks rather than months, depending on complexity, compliance requirements, and account structure.

3. Can an AWS Landing Zone adapt as business priorities change?

Yes. Landing zones are designed to be flexible. Organizations can add accounts, adjust guardrails, and update policies without disrupting existing workloads, ensuring the environment evolves with business needs.

4. How does a landing zone support regulatory compliance?

AWS Landing Zones integrate native tools like Security Hub, GuardDuty, and Config to enforce compliance continuously. Policies and automated audits ensure workloads meet industry regulations such as HIPAA, GDPR, or PCI DSS.

5. Is prior AWS expertise required to implement a landing zone?

While knowledge helps, SMBs can rely on AWS partners like Cloudtech to design, deploy, and manage landing zones. This reduces risk, accelerates setup, and ensures best practices are followed from day one.

How can SMBs choose the right cloud service provider?

Which cloud service provider should a business choose? The options range from global hyperscalers like AWS, Microsoft Azure, and Google Cloud, to private and hybrid solutions, to specialized vendors serving industries such as healthcare, retail, or finance. Each provider brings its own mix of strengths, trade-offs, and pricing models.

For SMBs, this variety is both a benefit and a challenge. The right provider can deliver scalability, cost predictability, strong security, and modern capabilities such as analytics or AI. The wrong choice, however, can lead to spiraling costs, compliance risks, and limited flexibility.

That’s why choosing the right cloud service provider is no longer a technical decision alone. This article explores the types of providers available and the factors that truly matter, covering how SMBs can position themselves for efficiency, resilience, and long-term competitiveness.

Key takeaways:

- AWS leads with global scale and SMB-focused programs, giving smaller businesses enterprise-level tools.

- Azure excels in Microsoft integration, while Google Cloud offers strong data and AI capabilities.

- Cloud adoption helps SMBs cut costs, scale instantly, and modernize beyond on-prem limitations.

- The right provider depends on business needs, but AWS consistently outperforms in breadth, reliability, and support.

- Working with an AWS partner like Cloudtech ensures a strategic, secure, and growth-ready modernization journey.

Different kinds of cloud service providers available today

Not all cloud service providers are the same, and that’s why choosing the right one matters. Some, like AWS, Azure, and Google Cloud, focus on giving businesses maximum scalability and a wide range of advanced tools. Others provide private or industry-specific clouds, where control, compliance, or customization take priority.

Hybrid and multi-cloud setups add yet another layer of flexibility, letting businesses spread workloads across providers for better reliability and choice. For SMBs, the key is understanding these options at a high level so they can pick a provider that fits their growth, budget, and security needs rather than getting lost in a one-size-fits-all promise.

1. Public cloud providers (AWS, Azure, GCP)

Public cloud providers are the most common choice for SMBs today. They eliminate the need for businesses to maintain physical servers while offering flexible, scalable infrastructure and advanced services. Among them, three players dominate the global market: AWS, Microsoft Azure, and Google Cloud Platform (GCP).

2. Private cloud providers (VMware Cloud, IBM Cloud, OpenStack-based)

Private cloud providers give SMBs more control over their data and infrastructure by offering dedicated environments, either on-premises or hosted. They are often chosen by businesses with strict compliance requirements, sensitive data workloads, or the need for highly customized architectures.

While private clouds may lack the elasticity of public providers, they excel in security, governance, and tailored configurations.

3. Hybrid cloud providers (AWS Outposts, Azure Arc, Google Anthos)

Hybrid cloud providers bridge the gap between on-premises infrastructure and the public cloud, giving SMBs the flexibility to run workloads where they perform best, whether that’s in their own data center, at the edge, or in the cloud.

They’re especially valuable for businesses that require low-latency performance, regulatory compliance, or a gradual move to full cloud adoption.

How to choose the right cloud service provider?

The first step in a cloud transition is finding a reliable partner that can grow with the business while keeping costs, compliance, and complexity in check. But with so many providers promising scalability, innovation, and security, how can an SMB cut through the noise and make the right choice? Below are the five most important factors to evaluate, explained in clear, practical terms.

1. Scalability & performance: One of the biggest advantages of the cloud is its ability to scale resources on demand, whether that means handling seasonal spikes in customer traffic, or supporting new applications without upfront infrastructure costs.

- Look for providers that offer auto-scaling features (e.g., AWS Auto Scaling, Azure Virtual Machine Scale Sets) so businesses don’t have to predict workloads in advance.

- Global reach matters, especially if customers are spread across regions. A provider with multiple availability zones and low-latency networks (like AWS or Azure) will help deliver faster, more reliable performance.

- SMBs should also evaluate SLAs (Service Level Agreements) to ensure uptime guarantees match their business-critical needs.

2. Cost efficiency & pricing models: Every SMB knows IT costs can spiral if not managed carefully. Cloud pricing can look straightforward, but hidden charges often come from data transfer, storage tiers, or underutilized resources.

- Compare models like pay-as-you-go (flexible but can spike during heavy usage), reserved instances (long-term commitments that bring savings), and spot instances (discounted spare capacity that can be interrupted).

- Use tools like AWS Cost Explorer, Azure Pricing Calculator, or GCP’s cost management dashboard to forecast monthly bills.

- Always check for hidden costs, such as data egress fees when moving data out of the provider’s cloud. These can be surprisingly high for growing SMBs.

3. Security & compliance: For SMBs handling sensitive customer data, security can’t be an afterthought. Cloud providers offer strong baseline protections, but not all are equal when it comes to compliance frameworks and regional data residency.

- Ensure the provider supports relevant regulations like HIPAA (healthcare), GDPR (EU businesses), or FINRA (financial services).

- Look for built-in security services such as encryption at rest and in transit, IAM (Identity and Access Management), and continuous monitoring.

- SMBs without in-house security teams should prefer providers with managed security offerings (like Amazon GuardDuty or Microsoft Defender for Cloud, formerly Azure Security Center) to reduce the operational burden.

4. Service ecosystem & innovation: The right cloud provider should not just meet the business needs today but also open doors for tomorrow’s opportunities.

- SMBs looking at AI, analytics, and automation should evaluate how advanced the provider’s ecosystem is. For example, AWS has a huge suite of AI/ML tools, GCP leads in data analytics, and Azure integrates tightly with Microsoft’s productivity stack.

- Consider the breadth of services, from databases and serverless computing to IoT and DevOps pipelines. A richer ecosystem gives a business the flexibility to experiment without constantly switching vendors.

- SMBs should prioritize providers that continue to innovate aggressively, ensuring their tech stack won’t feel outdated in two years.

5. Support & ease of management: Even the best cloud services can feel overwhelming without the right support. SMBs typically don’t have large IT teams, so ease of use and responsive support are crucial.

- Look for providers that offer 24/7 customer support, well-documented resources, and SMB-focused support tiers (AWS has Business Support, Azure offers ProDirect, etc.).

- Evaluate the management console and automation tools, since an intuitive dashboard can save countless hours.

- Training and enablement also matter; providers that offer certifications, workshops, and tutorials help SMBs upskill without hiring large teams.

The takeaway for SMBs: Choosing the right cloud service provider isn’t about who has the biggest brand; it’s about who aligns best with the business’s growth stage, industry, and goals. By weighing scalability, costs, security, innovation, and support, SMBs can make a choice that feels less like a risk and more like an investment in the future.

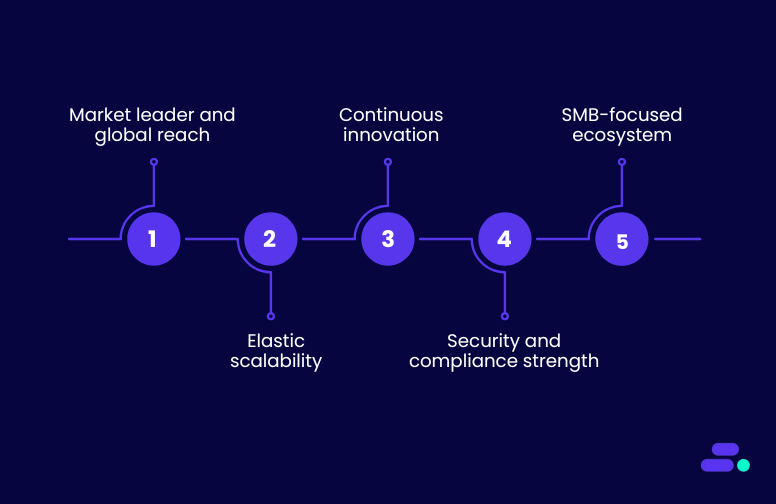

Why is AWS the most preferred cloud service provider?

Among all providers, Amazon Web Services (AWS) consistently stands out as the most trusted choice, with the largest share of the cloud infrastructure market at roughly 29%. With its global infrastructure, broad ecosystem of services, and SMB-focused initiatives, AWS gives smaller businesses the tools once reserved only for enterprises, without overwhelming complexity or cost.

The advantages of AWS for SMBs:

- Market leader and global reach: AWS offers the widest global infrastructure footprint, far ahead of Azure and GCP. This ensures low-latency access, high availability, and compliance coverage in virtually any region SMBs operate in.

- Elastic scalability: Unlike Azure, where pricing and scaling can become complex, AWS enables true pay-as-you-go elasticity, so SMBs avoid overprovisioning and only pay for what they need.

- Continuous innovation: While GCP is strong in AI/ML, AWS combines breadth with depth, providing services like Amazon SageMaker, Redshift, and Step Functions that bring advanced AI, analytics, and automation to SMBs with seamless integration into the broader AWS ecosystem.

- Security and compliance strength: AWS supports a broad set of compliance programs and attestations (HIPAA eligibility, GDPR, SOC reports, and more). Azure and GCP provide compliance too, but AWS’s scale and maturity make it the most battle-tested for regulated SMB industries like healthcare or finance.

- SMB-focused ecosystem: Beyond the technology, AWS invests directly in SMB success through the AWS Small Business Acceleration initiative and a robust partner network (like Cloudtech). This level of SMB-first support is less emphasized by Azure and GCP, which remain more enterprise-focused.

In short: AWS outperforms competitors by blending global reliability (where Azure lags), innovation at scale (beyond GCP’s niche strengths), and a partner ecosystem designed specifically for SMB growth.

While AWS provides the foundation, navigating its vast ecosystem can feel overwhelming for SMBs with lean IT teams. This is where having an AWS Partner like Cloudtech becomes invaluable.

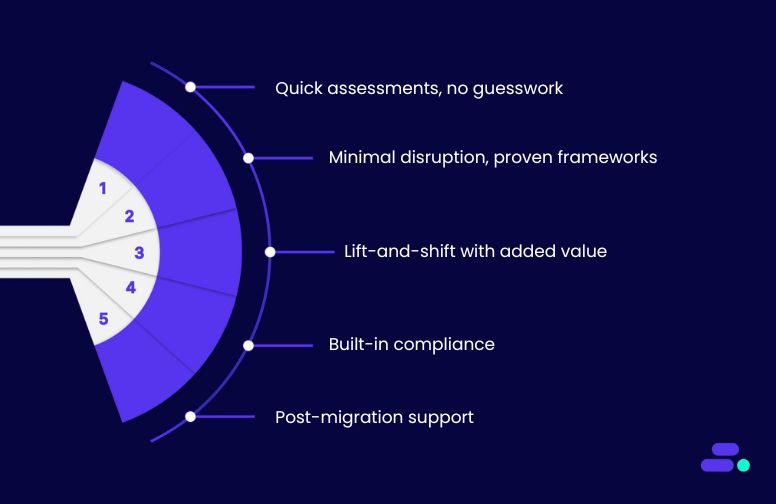

How does Cloudtech ensure a frictionless move to the cloud?

The fear of downtime, complexity, and disruption can make cloud migration feel daunting. Cloudtech’s approach is designed to remove this friction, making the journey to AWS smooth, predictable, and low-risk.

By combining proven migration frameworks with SMB-focused strategies, Cloudtech helps businesses modernize without interrupting daily operations.

Ways Cloudtech reduces friction in cloud migration:

- Lightweight assessments, zero guesswork: Cloudtech starts with a quick but thorough infrastructure and workload assessment, identifying cost drains, risks, and migration priorities. This ensures a clear roadmap without lengthy audits that slow progress.

- Minimal disruption with proven frameworks: Using AWS-native tools like Migration Hub and Application Migration Service, Cloudtech executes migrations in phases, so workloads transition securely with minimal downtime and no data loss.

- Lift-and-shift plus value-add: For speed, workloads are moved “as-is” where practical, but Cloudtech also enables cloud-native enhancements like analytics, automation, or AI, so SMBs get more than just a lift-and-shift.

- Compliance and resilience built-in: Migration isn’t just about moving workloads, but about upgrading them. Cloudtech bakes in multi-AZ redundancy, backup automation, and compliance controls from day one, avoiding costly retrofits later.

- Post-migration stability and support: The journey doesn’t end at migration. Cloudtech provides ongoing optimization, cost governance, and support, ensuring SMBs can adopt new capabilities at their own pace while keeping systems stable.

With Cloudtech, SMBs don’t just move to the cloud. They get there faster, with fewer roadblocks, and with a future-ready foundation that fuels innovation.

See how other SMBs have modernized, scaled, and thrived with Cloudtech’s support →

Wrapping up

Choosing AWS gives SMBs access to the world’s most trusted cloud platform, with unmatched scalability, a broad service ecosystem, and global reach. Partnering with Cloudtech ensures the move delivers modernization rather than just a lift-and-shift. It designs cloud-native architectures that are lean, secure, compliant, and tailored to SMB growth, helping businesses move beyond rigid legacy systems and into a foundation built for efficiency and competitiveness.

With Cloudtech, SMBs gain the freedom to embrace a cloud foundation that fuels efficiency and competitiveness. Connect with Cloudtech today!

FAQs

1. Do cloud providers lock SMBs into long-term contracts?

No. Most providers, including AWS, Azure, and Google Cloud, operate on a pay-as-you-go model. However, businesses can choose reserved or committed plans for bigger discounts. The key is balancing flexibility with cost savings.

2. How does cloud performance differ across providers?

Performance can vary based on regional data center presence, network architecture, and services. For example, AWS has the widest global infrastructure footprint, while Azure has strong enterprise integration, and GCP often shines in workloads needing heavy data analytics.

3. What role does support play when choosing a provider?

Each provider offers different tiers of support, but many SMBs find these costly or complex to navigate. A certified partner like Cloudtech can fill this gap by providing personalized, ongoing support without the enterprise-level overhead.

4. Can different providers be used together (multi-cloud)?

Yes. Many SMBs adopt a multi-cloud approach, for example using AWS for core infrastructure while leveraging Google Cloud for analytics or Azure for Microsoft integrations. This offers flexibility but requires careful management to avoid complexity.

5. How fast can SMBs get started with a cloud provider?

Technically, provisioning resources is nearly instant. The real timeline depends on migration planning, application readiness, and compliance checks. With expert guidance from partners, SMBs can often go live in weeks rather than months.

Harnessing cloud native application development for faster innovation

Why do traditional applications struggle to keep up with modern business demands? A major reason is that scaling them requires adding servers or hardware manually, which makes innovation costly and time-consuming. Cloud native application development changes this by using the cloud’s flexibility, automation, and distributed architecture.

Consider a small fintech startup managing a legacy payment platform. Deploying new features takes weeks, and scaling to handle spikes in transactions risks outages or performance issues. With cloud native development, applications are built as modular, containerized services that scale automatically, update seamlessly, and integrate with advanced tools like AI or analytics. This allows teams to deliver new features faster, maintain high reliability, and respond to market changes without heavy IT overhead.

This article explores why cloud native application development is essential for SMBs seeking speed, agility, and scalable innovation in today’s digital-first world.

Key takeaways:

- Cloud-native applications help SMBs innovate faster with built-in scalability, security, and automation.

- Modern app development enables seamless integration with AI, analytics, and legacy systems.

- Pay-as-you-go cloud models reduce upfront investment and align costs with actual usage.

- With cloud apps, SMBs can focus on business growth instead of infrastructure management.

- Partnering with an AWS expert like Cloudtech ensures compliant, resilient, and future-ready applications.

From monoliths to microservices: Understanding cloud application development

Traditional applications are typically built as monoliths, where all components are bundled together in a single codebase. While this structure may have worked in the past, it creates several technical bottlenecks.

Deploying a small change often requires rebuilding and redeploying the entire application, scaling a single component (like payment processing or patient record retrieval) means scaling the whole system, and a failure in one module can bring down the entire app. For SMBs, this translates to longer release cycles, higher maintenance costs, and greater downtime risk.

Cloud native development breaks applications into microservices: independently deployable modules, each responsible for a specific business function. These microservices communicate via lightweight APIs and can be containerized using tools like Docker and orchestrated with Kubernetes or Amazon ECS/EKS.

The benefits include:

- Independent scaling: Each service can scale based on demand without affecting others, saving costs and improving performance.

- Faster updates and deployment: Teams can release, test, and roll back individual services independently, reducing downtime and speeding up innovation.

- Resilience: If one microservice fails, the rest of the application continues to function, improving reliability and uptime.

- Tech stack flexibility: Different services can use the most suitable programming languages or frameworks, letting SMBs experiment and adopt new technologies faster.

Transitioning from a monolithic to a microservices architecture allows SMBs to achieve operational agility, cost efficiency, and faster time-to-market.

How can SMBs develop cloud native applications using AWS?

Cloud native application development lets SMBs build applications specifically for the cloud using modular, containerized services that scale independently, deploy rapidly, and integrate effortlessly. This approach reduces downtime, simplifies updates, and enables automation, resiliency, and observability. These are benefits that apply to any application, from healthcare to fintech.

Take the example of developing a fintech app designed to handle digital payments, manage customer accounts, and provide real-time financial insights. This platform would enable users to transfer funds, track balances, generate transaction reports, and receive personalized analytics, all while ensuring security, compliance, and high availability.

Step 1: Define application requirements and goals

Before building the fintech app, the SMB must clearly define its functional and non-functional requirements, performance expectations, and compliance obligations.

Key considerations include:

- Core functionality: The platform should enable secure online payments, account management, transaction history tracking, and reporting dashboards for both users and administrators.

- Regulatory compliance: The application must adhere to PCI DSS for payment data, GDPR for personal data protection, and any relevant local financial regulations.

- Scalability targets: The system should support an initial user base of 10,000+, with seamless scaling during peak usage or rapid growth.

- Availability and resilience: Uptime targets (e.g., 99.9% SLA) and disaster recovery requirements, including multi-AZ deployment, should be established.

- Security and monitoring: Logging, auditing, and threat detection protocols should be defined to protect sensitive financial data.

AWS tools to use:

- AWS Well-Architected Tool: Evaluates cloud architecture against the six pillars of the AWS Well-Architected Framework: operational excellence, security, reliability, performance efficiency, cost optimization, and sustainability. It highlights risks, suggests improvements, and helps SMBs align applications with best practices for scalable and maintainable solutions.

- AWS Cloud Adoption Framework (CAF): Guides organizations through cloud adoption by mapping business and technical capabilities to six perspectives: business, people, governance, platform, security, and operations. It identifies skill gaps, governance needs, and compliance requirements for a holistic migration and development strategy.

- AWS Trusted Advisor: Provides real-time recommendations on cost optimization, performance, security, fault tolerance, and service limits. SMBs can use it to fix misconfigurations, reduce overspending, improve resiliency, and optimize workloads before deployment or scaling.

Defining these requirements allows the SMB to establish a strong foundation, minimizing risks and ensuring a scalable, compliant, and efficient cloud-native application.
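
An uptime target is easier to reason about when converted into a downtime budget. The following minimal sketch does that arithmetic; the function name and the 30-day default period are assumptions made for illustration.

```python
def allowed_downtime_minutes(sla_percent: float, days: int = 30) -> float:
    """Minutes of downtime permitted in a period while still meeting the SLA."""
    if not 0 <= sla_percent <= 100:
        raise ValueError("sla_percent must be between 0 and 100")
    total_minutes = days * 24 * 60
    return round(total_minutes * (1 - sla_percent / 100), 2)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99):
        print(f"{target}% uptime -> {allowed_downtime_minutes(target)} min per 30 days")
```

A 99.9% target leaves about 43.2 minutes of downtime in a 30-day month, while 99.99% leaves about 4.3 minutes, which helps decide how much redundancy the design actually needs.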

Step 2: Architect the app as microservices

Once requirements are defined, the SMB designs the application using a microservices architecture, breaking the platform into modular, independently deployable components. Each service focuses on a specific business capability, which improves scalability, maintainability, and fault isolation.

Core microservices might include:

- Payment processing service: Handles all transactions securely, integrates with payment gateways, and manages transaction validation.

- Account management service: Maintains user profiles, authentication, and authorization workflows.

- Analytics service: Collects and analyzes usage patterns, detects potential fraud, and provides actionable insights for decision-making.

AWS tools to implement microservices:

- Amazon ECS/Amazon EKS: Run containerized microservices in a fully managed, scalable environment. ECS provides simple container orchestration, while EKS leverages Kubernetes for advanced orchestration, enabling SMBs to deploy, scale, and manage services efficiently.

- AWS Lambda: Executes serverless functions for lightweight, event-driven tasks such as real-time fraud detection, notifications, or data transformations. It eliminates the need to manage servers and scales automatically with demand.

- Amazon API Gateway: Offers secure, fully managed APIs for communication between microservices and external clients. It supports request throttling, authentication, and monitoring, ensuring reliable and controlled access.

- Amazon SQS/SNS/EventBridge: Provide asynchronous messaging and event-driven communication. SQS queues messages for processing, SNS broadcasts notifications, and EventBridge routes events across services, decoupling components and enhancing reliability.

Decomposing the fintech platform into microservices and using AWS services enables SMBs to update, scale, and deploy features independently, reducing downtime and accelerating innovation.
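
As an illustration of the event-driven pattern, here is a minimal sketch of a Lambda handler that consumes payment messages from an SQS queue. The `process_payment` rule and the message fields are hypothetical. The `batchItemFailures` response only takes effect when partial batch responses are enabled on the queue’s event source mapping, which is assumed here.

```python
import json


def process_payment(payment: dict) -> None:
    """Hypothetical business rule: reject non-positive amounts."""
    if payment.get("amount", 0) <= 0:
        raise ValueError("amount must be positive")


def lambda_handler(event, context):
    """Entry point for an SQS-triggered Lambda function."""
    failures = []
    for record in event.get("Records", []):
        try:
            # Each SQS record carries the message payload as a string body.
            process_payment(json.loads(record["body"]))
        except (ValueError, KeyError):
            # Report only the failed message so it can be retried,
            # instead of failing the whole batch.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Because the queue sits between services, a slow or failing consumer delays only its own messages rather than blocking the service that produced them.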

Step 3: Build and containerize services

After architecting the fintech platform, each microservice is developed and packaged independently to enable agile development and seamless deployment. This ensures that updates to one service do not disrupt others, while maintaining consistent performance and reliability.

Examples include:

- Payment processing service: Packaged in a Docker container to ensure portability and consistent runtime across environments.

- Analytics service: Encapsulates Python code and ML models within a container for automated data processing and fraud detection.

- Testing pipelines: Each microservice has its own testing workflow, ensuring quality and isolating issues before deployment.

AWS tools for containerization and CI/CD:

- AWS CodeBuild: Provides fully managed build services to compile source code, run tests, and produce container images for each microservice, ensuring fast, repeatable, and isolated builds.

- AWS CodeArtifact: Acts as a secure artifact repository that stores, versions, and shares dependencies across teams, preventing conflicts and ensuring compliance with governance policies.

- AWS CodePipeline: Automates the end-to-end CI/CD workflow, integrating with CodeBuild, testing stages, and deployment targets so each microservice can be released independently and reliably.

Containerizing services and establishing CI/CD pipelines with AWS tools allows SMBs to release updates faster, reduce operational risk, and maintain high availability for their fintech platform.
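
A per-service testing workflow can start very small. The sketch below shows the kind of unit test a build stage might run for the payment service before an image is produced. The fee rule, the 2.9% rate, and all names are hypothetical.

```python
import unittest
from decimal import Decimal, ROUND_HALF_UP


def transaction_fee(amount: Decimal, rate: Decimal = Decimal("0.029")) -> Decimal:
    """Hypothetical fee rule: a percentage of the amount, rounded to cents."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return (amount * rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


class TransactionFeeTest(unittest.TestCase):
    def test_fee_is_rounded_to_cents(self):
        self.assertEqual(transaction_fee(Decimal("100.00")), Decimal("2.90"))

    def test_rejects_non_positive_amounts(self):
        with self.assertRaises(ValueError):
            transaction_fee(Decimal("0"))
```

Running `python -m unittest` in the service’s directory executes these tests. Keeping them next to the service code means a failure blocks only that service’s release.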

Step 4: Set up CI/CD and automation

To ensure updates are deployed reliably and safely, the fintech startup implements automated CI/CD pipelines and deployment strategies. This allows the team to test and release new features without impacting live services, maintaining high availability for end users.

Examples include:

- Isolated testing: Payment features and other critical updates are tested independently before deployment, reducing the risk of bugs affecting the platform.

- Blue/Green deployments: Critical services like payment processing leverage blue/green strategies to switch traffic seamlessly between environments, minimizing downtime and operational risk.

AWS tools for CI/CD and automation:

- AWS CodePipeline + CodeDeploy: Automates build, test, and deployment workflows, using blue/green or canary strategies to update microservices with minimal downtime and controlled rollouts.

- AWS CloudFormation/AWS CDK: Enables infrastructure as code, allowing teams to define, version, and consistently provision AWS resources across environments with repeatability and governance.

- AWS X-Ray: Provides distributed tracing to track requests through microservices, helping pinpoint performance bottlenecks, errors, and latency issues for faster debugging and root cause analysis.

Combining automated CI/CD pipelines with AWS’s deployment and tracing tools allows SMBs to safely roll out updates, scale confidently, and maintain uninterrupted service for their fintech platform.
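
The difference between canary and linear rollouts comes down to how traffic shifts over time. This sketch only simulates the two schedules in plain Python and does not call CodeDeploy. The function names and parameters are assumptions for illustration.

```python
def linear_shift_schedule(step_percent: int, interval_minutes: int) -> list[tuple[int, int]]:
    """Return (minute, percent_on_new_version) pairs for a linear rollout."""
    if not 0 < step_percent <= 100:
        raise ValueError("step_percent must be between 1 and 100")
    schedule = []
    percent, minute = 0, 0
    while percent < 100:
        # Move one more step of traffic to the new version, capped at 100%.
        percent = min(100, percent + step_percent)
        schedule.append((minute, percent))
        minute += interval_minutes
    return schedule


def canary_schedule(canary_percent: int, bake_minutes: int) -> list[tuple[int, int]]:
    """Shift a small slice first, then the rest once the bake time has passed."""
    if not 0 < canary_percent < 100:
        raise ValueError("canary_percent must be between 1 and 99")
    return [(0, canary_percent), (bake_minutes, 100)]


if __name__ == "__main__":
    print(linear_shift_schedule(25, 5))  # four equal steps, five minutes apart
    print(canary_schedule(10, 5))        # 10% first, the remainder after 5 minutes
```

A canary exposes a small share of users to a bad release for a short time, while a linear rollout spreads the risk over more steps. For a payment service, either is usually paired with alarms that trigger an automatic rollback.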

Step 5: Implement observability and monitoring

For a fintech platform, maintaining real-time visibility into operations is critical. SMBs must detect and respond to errors, latency issues, or suspicious activity immediately to protect both users and business reputation. Key practices include:

- Transaction and service monitoring: Track payment processing errors, service response times, and API failures to ensure smooth operations.

- Alerts and notifications: Configure alerts for failed jobs, unusual transaction patterns, or potential fraud, enabling rapid response.

AWS services for observability and monitoring:

- Amazon CloudWatch: Collects and monitors metrics, logs, and events across microservices, enabling real-time visibility, custom dashboards, and automated alarms.

- AWS X-Ray: Provides distributed tracing to visualize request flows, identify latency hotspots, and diagnose errors across interconnected services.

- Amazon SNS: Delivers instant notifications or alerts to operations teams when thresholds or anomalies are detected, ensuring rapid incident response.

Implementing comprehensive observability with AWS tools enables SMBs to maintain high reliability, detect problems early, and ensure a secure, seamless fintech experience for users.
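
Alerting rules usually fire only when a metric breaches its threshold for several recent datapoints, which avoids paging on a single blip. The sketch below models that "M out of N" idea in plain Python. It is a simplified illustration, not CloudWatch’s actual evaluation logic, and the sample error rates are made up.

```python
def error_rate_percent(errors: int, requests: int) -> float:
    """Share of failed requests as a percentage (0.0 when there is no traffic)."""
    return 0.0 if requests == 0 else 100.0 * errors / requests


def should_alarm(datapoints, threshold, evaluation_periods, datapoints_to_alarm):
    """True if enough of the most recent datapoints exceed the threshold."""
    if evaluation_periods < 1 or datapoints_to_alarm < 1:
        raise ValueError("periods and datapoints_to_alarm must be at least 1")
    recent = datapoints[-evaluation_periods:]
    breaching = sum(1 for value in recent if value > threshold)
    return breaching >= datapoints_to_alarm


if __name__ == "__main__":
    # Hypothetical per-minute payment error rates, in percent.
    rates = [0.5, 0.7, 2.4, 3.1, 0.9]
    print(should_alarm(rates, threshold=2.0, evaluation_periods=3, datapoints_to_alarm=2))
```

Requiring two breaches out of the last three datapoints catches a sustained rise in payment errors while ignoring a one-off spike.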

Step 6: Ensure security and compliance

Safeguarding sensitive financial data is critical. Security isn’t optional; it is foundational to trust and regulatory compliance.

Key practices include:

- Data protection: Encrypt all user data both at rest and in transit to prevent unauthorized access.

- Access control: Apply least privilege policies so that users and services can only access what they absolutely need.

- Continuous auditing: Regularly audit configurations and monitor compliance with financial regulations like PCI DSS and GDPR.

AWS tools for security and compliance:

- AWS Identity and Access Management (IAM): By enforcing the principle of least privilege, IAM ensures each user or service has only the access needed to perform its tasks, reducing the risk of unauthorized access.

- AWS Key Management Service (KMS): Creates and manages the encryption keys that protect sensitive data at rest across databases, S3 buckets, and microservices. Combined with TLS for data in transit, this supports regulatory compliance and data confidentiality.

- AWS Shield & AWS WAF: AWS Shield provides managed protection against DDoS attacks, while AWS WAF allows SMBs to define custom rules to block malicious traffic at the application layer. Together, they safeguard fintech applications from external threats without impacting performance.

- AWS Config & Security Hub: AWS Config tracks configuration changes, while Security Hub aggregates alerts and provides actionable insights, helping SMBs maintain security posture and meet audit requirements efficiently.

These tools ensure that the cloud-native fintech applications are secure, compliant, and resilient without excessive manual overhead.
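
To show what least privilege looks like in practice, here is a sketch that builds an IAM policy document for the payment service and checks it for wildcards. It assumes, purely for illustration, that the service stores payments in a DynamoDB table. The table ARN, account ID, and statement ID are placeholders.

```python
import json


def least_privilege_policy(table_arn: str) -> dict:
    """Allow only the specific actions the service needs, on one table."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PaymentServiceTableAccess",
                "Effect": "Allow",
                "Action": ["dynamodb:GetItem", "dynamodb:PutItem", "dynamodb:Query"],
                "Resource": table_arn,
            }
        ],
    }


def has_wildcards(policy: dict) -> bool:
    """Flag any statement whose actions or resources contain '*'."""
    for statement in policy["Statement"]:
        actions = statement["Action"]
        resources = statement["Resource"]
        actions = [actions] if isinstance(actions, str) else list(actions)
        resources = [resources] if isinstance(resources, str) else list(resources)
        if any("*" in item for item in actions + resources):
            return True
    return False


if __name__ == "__main__":
    # Placeholder ARN; a real one would come from the deployed table.
    policy = least_privilege_policy(
        "arn:aws:dynamodb:us-east-1:123456789012:table/Payments"
    )
    print(json.dumps(policy, indent=2))
    print("contains wildcards:", has_wildcards(policy))
```

A simple check like `has_wildcards` could run in the pipeline so that overly broad policies are caught before they reach production.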

Step 7: Scale and optimize

After deploying the fintech application, SMBs need to ensure it can handle growth, spikes in traffic, and changing workloads efficiently.

Scaling and optimization involve both performance management and cost control:

- Dynamic scaling: Automatically adjust compute resources for microservices like payment processing or analytics based on real-time demand. This ensures the app remains responsive even during peak transaction periods.

- Cost optimization: Mix on-demand and spot instances for non-critical workloads, such as analytics or batch processing, to reduce operational costs without impacting performance.

- Resource efficiency: Continuously monitor usage patterns and optimize infrastructure to avoid overprovisioning.

AWS services for scaling and optimization:

- Auto Scaling Groups: Dynamically adjust Amazon EC2 capacity based on demand, ensuring applications remain performant while minimizing costs.

- ECS/EKS Service Auto Scaling: Scales containerized workloads automatically using service-level metrics, maintaining reliability during traffic spikes or drops.

- AWS Lambda: Executes event-driven functions that scale seamlessly with workload volume, eliminating the need for manual provisioning.

- AWS Cost Explorer & Trusted Advisor: Provide visibility into usage patterns, cost forecasting, and actionable recommendations to optimize performance and reduce unnecessary spend.

Implementing these AWS tools allows SMBs to maintain high availability, ensure consistent user experience, and control costs while growing their cloud-native fintech application efficiently.
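
The idea behind metric-based scaling can be sketched with a simple proportional rule: grow or shrink the task count so that the average metric moves back toward its target. This is a simplified approximation for illustration, not the exact algorithm AWS uses. The limits and names are assumptions.

```python
import math


def desired_task_count(current_tasks: int, current_metric: float,
                       target_metric: float, min_tasks: int = 1,
                       max_tasks: int = 20) -> int:
    """Scale proportionally to the metric, clamped to the allowed range."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_tasks * current_metric / target_metric)
    return max(min_tasks, min(max_tasks, raw))


if __name__ == "__main__":
    # 4 tasks at 90% average CPU with a 60% target -> scale out.
    print(desired_task_count(4, 90, 60))
    # 4 tasks at 30% average CPU with a 60% target -> scale in.
    print(desired_task_count(4, 30, 60))
```

The `min_tasks` and `max_tasks` bounds matter as much as the formula: the floor protects availability during quiet periods, and the ceiling caps spend during unexpected spikes.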

Step 8: Continuous improvement

Building a cloud-native fintech application is not a one-time effort. Continuous improvement ensures the platform evolves with customer needs, regulatory changes, and technological advances. SMBs can adopt iterative enhancements while keeping the app reliable and secure.

Key practices for continuous improvement:

- AI and machine learning: Integrate predictive features like fraud detection or credit risk scoring using Amazon SageMaker, enabling smarter, automated decision-making.

- Workflow automation: Streamline repetitive tasks such as payment reconciliation, notifications, or report generation with AWS Step Functions to reduce manual errors and operational overhead.

- Data-driven insights: Build dynamic dashboards and analytics reports with Amazon QuickSight to visualize user behavior, transaction trends, and operational KPIs, guiding strategic decisions.

These AWS cloud-native services allow SMBs to continuously enhance their cloud application, stay competitive, and deliver a better customer experience without the friction of traditional software update cycles.
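
As a sketch of workflow automation, the following defines a small payment reconciliation workflow in the Amazon States Language, written as a Python dictionary, together with a basic sanity check. The state names and Lambda ARNs are hypothetical, and the validator covers only the simple chain of Task states shown here.

```python
import json

ARN_PREFIX = "arn:aws:lambda:us-east-1:123456789012:function:"  # placeholder

RECONCILIATION_WORKFLOW = {
    "Comment": "Hypothetical nightly payment reconciliation",
    "StartAt": "FetchTransactions",
    "States": {
        "FetchTransactions": {
            "Type": "Task",
            "Resource": ARN_PREFIX + "fetch-transactions",
            "Next": "Reconcile",
        },
        "Reconcile": {
            "Type": "Task",
            "Resource": ARN_PREFIX + "reconcile",
            "Next": "SendReport",
        },
        "SendReport": {
            "Type": "Task",
            "Resource": ARN_PREFIX + "send-report",
            "End": True,
        },
    },
}


def validate_transitions(definition: dict) -> list[str]:
    """Return problems such as a missing start state or a dangling Next."""
    states = definition["States"]
    problems = []
    if definition["StartAt"] not in states:
        problems.append(f"StartAt '{definition['StartAt']}' is not a defined state")
    for name, state in states.items():
        target = state.get("Next")
        if target is not None and target not in states:
            problems.append(f"{name} -> {target} does not exist")
        if target is None and not state.get("End"):
            problems.append(f"{name} has neither Next nor End")
    return problems


if __name__ == "__main__":
    print(validate_transitions(RECONCILIATION_WORKFLOW) or "definition looks consistent")
    print(json.dumps(RECONCILIATION_WORKFLOW, indent=2))
```

Keeping the definition in code means it can be reviewed, versioned, and checked in the same pipeline as the services it coordinates.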

Outcomes for the SMB:

- New payment features are deployed weekly instead of monthly.

- Transactions are processed reliably even during spikes.

- Data security and compliance are built in from day one.

- Operational costs are optimized, and infrastructure scales automatically.

Reaching such outcomes is easier for SMBs from regulated sectors with the guidance of a specialized AWS partner like Cloudtech. Beyond technical know-how, a partner ensures security and compliance are integrated from the start, architectures scale predictably with demand, and operational costs stay optimized.

How does Cloudtech help SMBs build and scale cloud-native applications?

Developing cloud applications is about designing systems that are scalable, secure, and built to evolve with business needs. This ensures faster releases, reduced operational overhead, and applications that perform reliably at scale.

Cloudtech’s strength lies in its SMB-first approach. It helps teams design apps that balance lean budgets with high performance, automates deployment pipelines to accelerate time-to-market, and embeds compliance and resilience into the application lifecycle.

Beyond launch, Cloudtech provides ongoing support so SMBs can continuously innovate and compete effectively with larger players.

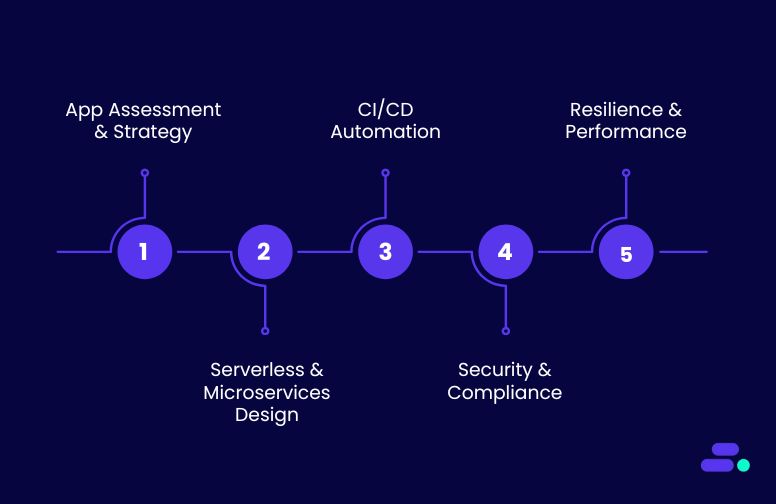

Key Cloudtech services for cloud application development:

- Application assessment & strategy: Cloudtech reviews existing applications and identifies opportunities to modernize with AWS-native services, ensuring architectures align with business goals.

- Serverless & microservices design: Using AWS Lambda, ECS/EKS, and event-driven patterns, Cloudtech builds applications that scale seamlessly while reducing infrastructure overhead.

- CI/CD automation: With tools like AWS CodePipeline and CodeBuild, Cloudtech sets up automated pipelines for faster, more reliable deployments.

- Integrated security & compliance: From IAM best practices to data encryption, Cloudtech embeds security controls and regulatory compliance directly into applications.

- Resilience & performance optimization: Applications are architected with multi-AZ redundancy, monitoring, and auto-scaling to ensure high availability and smooth user experiences.

Through this approach, SMBs gain the ability to launch new features quickly, scale confidently, and deliver secure, high-performing applications, all while keeping costs under control and staying focused on growth.

See how other SMBs have modernized, scaled, and thrived with Cloudtech’s support →

Wrapping up

With the right architecture, automation, and security, applications become more than tools; they become drivers of growth, resilience, and customer satisfaction. Partnering with an AWS expert like Cloudtech helps SMBs bypass the steep learning curve, avoid costly missteps, and accelerate their journey from idea to production-ready applications.

By combining AWS best practices with an SMB-first mindset, Cloudtech ensures that every application is designed to scale, adapt, and deliver lasting value.

Now is the time to modernize your applications and future-proof your business—Cloudtech can help you get there.

FAQs

1. How long does it typically take to build a cloud-native application for an SMB?

Timelines vary depending on complexity, but many SMBs see a minimum viable product (MVP) within weeks. Cloud-native services and serverless components accelerate delivery compared to traditional development.

2. Can cloud applications integrate with existing legacy systems?

Yes. Cloud-native apps can be designed with APIs and event-driven architectures that connect seamlessly to on-prem or older systems, enabling gradual modernization instead of a disruptive overhaul.

3. What security measures are built into cloud application development?

Cloud apps are designed with encryption, identity and access management (IAM), compliance controls, and continuous monitoring baked in from the start, ensuring protection of sensitive customer and financial data.

4. How do SMBs control costs when developing on the cloud?

Using a pay-as-you-go model, SMBs only pay for the resources they use. Cost optimization tools like AWS Trusted Advisor and Cost Explorer help keep budgets on track while avoiding over-provisioning.

5. Do SMBs need in-house cloud expertise to maintain cloud applications?

Not necessarily. With managed services, automated scaling, and the support of an AWS partner like Cloudtech, SMBs can run and evolve their applications without needing large in-house cloud teams.

Minimizing downtime with AWS availability zones

Consider a regional healthcare clinic that relies on a digital patient management system for appointments, prescriptions, and access to medical history. Now picture one data center suddenly going offline due to a hardware failure or cooling system breakdown. If everything were tied to that single site, doctors and staff would instantly lose access, delaying care and putting patients at risk.

With availability zones (AZs), that risk is minimized. Each AZ consists of one or more discrete data centers with independent power, cooling, and networking. By spreading workloads across multiple AZs, an application can keep running on the remaining zones even if one fails. For SMBs in healthcare, this means uninterrupted access to critical applications, stronger compliance with patient safety standards, and the assurance that downtime won’t get in the way of care delivery.

This article explores how AZs work, why they matter for SMBs, and how businesses can leverage them to ensure reliability, scalability, and uninterrupted operations.

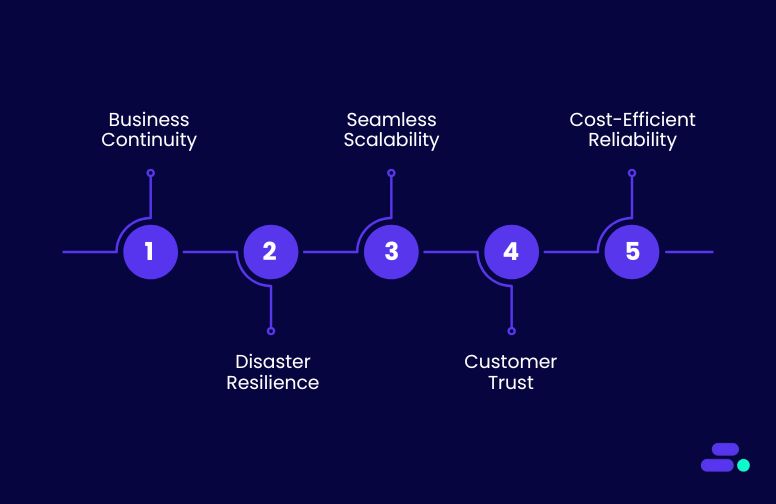

Key takeaways:

- Business continuity is built-in: AZs ensure applications and data remain accessible even if one zone experiences issues, reducing disruption.

- Disaster resilience at scale: Geographic separation of AZs protects SMBs from localized outages, natural disasters, or infrastructure failures.

- Seamless scalability: Workloads can be distributed across multiple AZs to handle traffic spikes efficiently, keeping performance consistent.

- Cost-effective reliability: AWS’s pay-as-you-go model makes high availability and fault tolerance accessible without overprovisioning or excess spend.

- Foundation for resilient architecture: AZs allow SMBs to design cloud-native, multi-zone systems that balance performance, redundancy, and compliance.

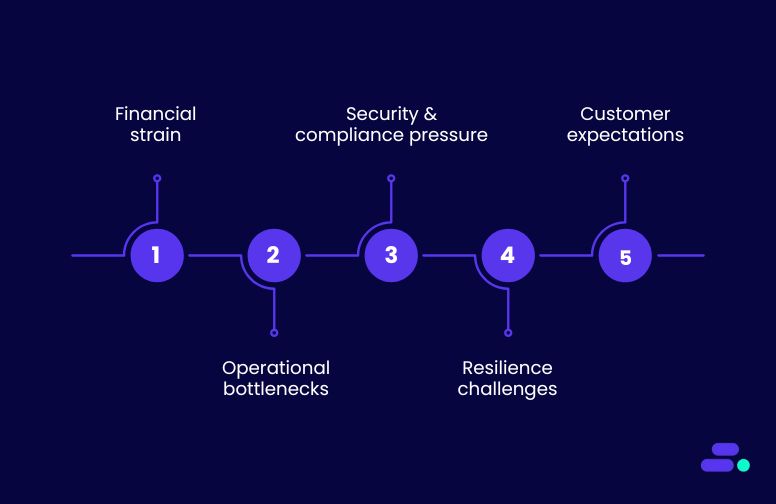

5 reasons SMBs can’t afford to ignore availability zones

For SMBs, even a short period of downtime can mean lost sales, eroded customer trust, or compliance risks. Traditional infrastructures often lack built-in redundancy, leaving smaller businesses vulnerable when something goes wrong.

AZs change this equation by offering fault-tolerant, geographically separated data centers that keep applications and data running without interruption. For SMBs aiming to compete in a digital-first market, availability zones provide the resilience and reliability that on-prem systems and single-location hosting can’t match:

1. Business continuity without disruption

Staying online isn’t just about convenience, but about survival. For SMBs, even an hour of downtime can ripple across operations and customer trust. Running applications across multiple AZs ensures continuity by minimizing single points of failure.

Why this matters for SMBs:

- Customer trust: Clients expect uninterrupted access, whether it’s logging into an app, placing an order, or accessing sensitive data.

- Revenue protection: Downtime means missed sales opportunities and operational slowdowns.

- Compliance and credibility: Many industries demand resilient systems; continuity shows the business is reliable and future-ready.

Use case: A regional e-commerce business runs its online storefront and inventory system across three AWS Availability Zones. When one zone faces a hardware failure, traffic is automatically rerouted to the other zones. Customers keep shopping without ever noticing the disruption, while the business avoids lost revenue and urgent IT firefighting.
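
The rerouting in this use case can be modeled in a few lines. The sketch below assigns requests round-robin to whichever zones are currently healthy. In practice a managed load balancer with health checks would do this. The zone names and health flags here are made up for illustration.

```python
from itertools import cycle


def route_requests(request_ids, zone_health: dict) -> dict:
    """Assign requests round-robin to zones currently marked healthy."""
    healthy = [zone for zone, ok in zone_health.items() if ok]
    if not healthy:
        raise RuntimeError("no healthy Availability Zone available")
    zones = cycle(healthy)
    return {request_id: next(zones) for request_id in request_ids}


if __name__ == "__main__":
    # One zone has failed its health check; traffic skips it entirely.
    health = {"us-east-1a": True, "us-east-1b": False, "us-east-1c": True}
    for request_id, zone in route_requests(range(4), health).items():
        print(request_id, "->", zone)
```

No request is sent to the unhealthy zone, so customers see no errors as long as the remaining zones have enough capacity to absorb the extra load.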

2. Disaster resilience at scale

A local disaster can threaten the very survival of the business. AZs are built to withstand these challenges by being physically separate yet interconnected, ensuring workloads and data remain protected.

Why this matters for SMBs:

- Local incident protection: Floods, fires, or power failures in one location won’t wipe out operations.

- Data safety: Replication across AZs safeguards critical business data from being lost.

- Peace of mind: Owners and teams can focus on growth instead of worrying about “what if” scenarios.

Use case: A healthcare SMB stores patient records and appointment systems across multiple AWS Availability Zones. When a severe storm causes a power outage in one zone, the system automatically fails over to another AZ. Doctors still access patient records in real time, ensuring uninterrupted care while the outage is resolved behind the scenes.

3. Seamless scalability

SMBs often face unpredictable growth, whether it’s seasonal demand, a viral campaign, or a sudden increase in customers. With AZs, scaling no longer requires overbuying servers or risking performance dips. Workloads can be distributed intelligently, so customers always experience reliable service.

Why this matters for SMBs:

- Traffic flexibility: SMBs can manage sudden traffic surges without the need to overprovision resources in advance.

- Cost efficiency: Instead of paying for idle servers that may only be used occasionally, businesses only pay for the capacity they actually consume.

- Customer experience: Even during peak demand, applications remain fast and reliable, ensuring customers enjoy a smooth, frustration-free interaction.

Use case: An e-commerce SMB runs its website and order systems across multiple AWS AZs. During a festive sale, traffic surges threefold. Instead of crashing or slowing down, the workload is spread across zones, ensuring customers browse, order, and pay without disruption, boosting sales and brand trust.

4. Improved customer trust

Consistency is the cornerstone of trust. When customers, patients, or partners know they can always access the systems and data they depend on, confidence in the business naturally grows.

Why this matters for SMBs:

- Reliability builds credibility: Uptime isn’t just a technical metric; it directly affects how clients perceive professionalism and dependability.

- Data accessibility ensures confidence: Assuring customers that their data will remain safe and available reinforces loyalty.

- Partnerships thrive on stability: Reliable systems make SMBs stronger collaborators for vendors, healthcare providers, and service partners.

Use case: A regional healthcare provider runs its electronic health records (EHR) platform across multiple AWS Availability Zones. Even if one zone experiences a disruption, doctors and patients still access records without delay. This consistency reinforces patient trust, assuring them that their sensitive medical data is always available and protected.

5. Cost-efficient reliability

High availability is often associated with enterprise-level budgets, but AWS flips this narrative. Its pay-as-you-go model allows SMBs to deploy fault-tolerant, multi-zone architectures without overspending, making resilience both practical and affordable.

Why this matters for SMBs:

- Enterprise-grade reliability at SMB cost: Businesses can achieve the same redundancy strategies as large enterprises without massive upfront investments.

- No wasted resources: With elastic scaling, SMBs only pay for what they use, avoiding the trap of idle backup infrastructure.

- Predictable costs: Transparent pricing enables SMBs to balance resilience and affordability, removing the fear of hidden IT expenses.

Use case: A growing e-commerce SMB distributes its online storefront across multiple AWS Availability Zones. During holiday sales, the system scales to handle surging traffic, then automatically scales back down once demand subsides. The company pays only for the extra capacity it needs in peak periods, delivering uninterrupted shopping experiences without straining its IT budget.

How can SMBs establish a resilient cloud infrastructure with AWS Availability Zones?

The AWS Cloud is designed with global resilience at its core. Today, it spans 117 Availability Zones (AZs) across 37 Geographic Regions, with plans already underway to add 13 more AZs and 4 new Regions in New Zealand, the Kingdom of Saudi Arabia, Chile, and the AWS European Sovereign Cloud. Each Availability Zone is a physically separate, independent facility with its own power, cooling, and networking, yet closely interconnected with low-latency links.

For SMBs, this global footprint means they can build applications that are not just highly available but also closer to their customers, whether that’s in healthcare, retail, finance, or manufacturing. It ensures that critical workloads can withstand local disruptions while continuing to deliver seamless service, all without the burden of building and maintaining their own physical data centers.

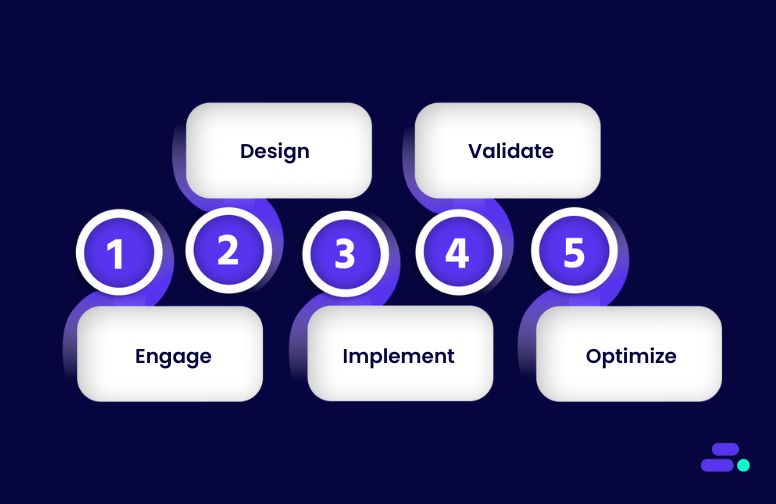

Here’s how SMBs can establish a strong cloud infrastructure based on this framework of availability zones:

1. Engage: Define resilience goals

Begin by identifying what “always on” means for the business. For some SMBs, it may mean keeping customer-facing applications running 24/7 without interruption. For others, it could mean maintaining compliance and data integrity in industries like healthcare or finance. These clear goals help shape the right multi-AZ strategy.

AWS actions to take in this step:

- Use AWS Well-Architected Framework (Reliability Pillar) to assess business continuity needs.

- Enable AWS Trusted Advisor to review fault tolerance, service limits, and security gaps.

- Map workloads against AWS Regions and Availability Zones, deciding whether to run active-active (across multiple AZs simultaneously) or active-passive (primary with failover) setups.

- If compliance is a driver, align with AWS Artifact for on-demand compliance documentation (e.g., HIPAA, SOC, PCI).
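
One way to make “always on” concrete is to turn an availability target into a yearly downtime budget, and to estimate what a second or third zone adds. The sketch below is illustrative arithmetic only. The function names are made up for this example, and the multi-AZ estimate assumes zones fail independently, which is a simplification.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def downtime_budget_hours(availability: float) -> float:
    """Hours per year a service may be down and still meet the target."""
    return (1.0 - availability) * HOURS_PER_YEAR


def multi_az_availability(single_az: float, zones: int) -> float:
    """Availability if the service stays up while at least one zone is up.

    Assumes zone failures are independent (a simplifying assumption).
    """
    return 1.0 - (1.0 - single_az) ** zones


if __name__ == "__main__":
    for target in (0.99, 0.999, 0.9999):
        hours = downtime_budget_hours(target)
        print(f"{target:.2%} target allows about {hours:.2f} hours of downtime per year")
    print(f"two zones at 99% each: {multi_az_availability(0.99, 2):.4%}")
```

A target with a small downtime budget usually points toward active-active, while a looser target may be served well enough by active-passive with tested failover.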

2. Design: Distribute workloads across AZs

Architect applications to run across two or more AWS Availability Zones (AZs) within a region. This geographic separation protects against localized outages such as hardware failures, power disruptions, or natural disasters, ensuring services remain online and responsive.

AWS actions to take in this step:

- Deploy compute workloads using Amazon EC2 Auto Scaling Groups across multiple AZs.

- Use Elastic Load Balancing (ELB) to automatically route traffic between healthy instances across zones.

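- For databases, enable Amazon RDS Multi-AZ deployments or run Amazon Aurora with replicas in different AZs so data stays available if one zone fails. (Aurora Global Database is the option for replicating across Regions rather than across zones.)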

- Store critical data in Amazon S3, which automatically stores objects across multiple AZs within a region for most storage classes (the One Zone classes are the exception).

- Design event-driven components with Amazon SQS, SNS, or EventBridge to ensure message durability and cross-AZ delivery.
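
The idea of spreading compute across zones, and sizing it so one zone can fail without dropping below peak demand, can be sketched in a few lines. This is a planning aid under stated assumptions, not how EC2 Auto Scaling is implemented. The function names are hypothetical and the zone names are just examples.

```python
import math
from itertools import cycle


def spread_across_zones(instance_count: int, zones: list[str]) -> dict[str, int]:
    """Assign instances round-robin so zone counts differ by at most one."""
    placement = {zone: 0 for zone in zones}
    for _, zone in zip(range(instance_count), cycle(zones)):
        placement[zone] += 1
    return placement


def instances_needed(peak_instances: int, zone_count: int) -> int:
    """Total instances so peak load is still covered after losing one zone."""
    if zone_count < 2:
        raise ValueError("need at least two zones to tolerate a zone failure")
    per_zone = math.ceil(peak_instances / (zone_count - 1))
    return per_zone * zone_count


if __name__ == "__main__":
    zones = ["us-east-1a", "us-east-1b", "us-east-1c"]  # example zone names
    total = instances_needed(peak_instances=6, zone_count=len(zones))
    print(total, spread_across_zones(total, zones))
```

The sizing rule shows why three zones are often cheaper than two for the same protection: with two zones each must carry the full peak alone, while with three each carries only half of it.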

3. Implement: Use AWS services with built-in multi-AZ support

Rather than manually engineering fault tolerance, SMBs can lean on AWS managed services that natively replicate data, route traffic, and balance workloads across multiple Availability Zones. This ensures resiliency without adding operational complexity, allowing IT teams to focus on business innovation instead of firefighting outages.

AWS actions to take in this step:

- Use Amazon RDS Multi-AZ or Amazon Aurora to get automatic synchronous replication and failover between zones.

- Store data in Amazon S3, which is designed for 11 nines (99.999999999%) of durability by replicating across multiple AZs.

- Deploy Elastic Load Balancing (ALB/NLB) to intelligently distribute requests to healthy instances across zones.

- Run applications on Amazon ECS or Amazon EKS, with tasks and pods distributed across AZs for high availability.

- Configure Amazon Route 53 with health checks and failover policies for resilient DNS routing.
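
To show what “distribute requests to healthy instances across zones” means in practice, here is a toy model of health-aware round-robin routing. It is a sketch of the general technique, not Elastic Load Balancing’s actual algorithm, and the `Target` and `HealthAwareBalancer` names are invented for illustration.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Target:
    name: str
    zone: str
    healthy: bool = True


class HealthAwareBalancer:
    """Round-robin over targets, skipping any that are marked unhealthy."""

    def __init__(self, targets: list[Target]) -> None:
        self._targets = targets
        self._ring = cycle(targets)

    def next_target(self) -> Target:
        # Inspect each target at most once per request before giving up.
        for _ in range(len(self._targets)):
            candidate = next(self._ring)
            if candidate.healthy:
                return candidate
        raise RuntimeError("no healthy targets in any zone")


if __name__ == "__main__":
    fleet = [Target("web-1", "zone-a"), Target("web-2", "zone-b"), Target("web-3", "zone-c")]
    balancer = HealthAwareBalancer(fleet)
    fleet[0].healthy = False  # simulate zone-a becoming unavailable
    print([balancer.next_target().name for _ in range(4)])
```

With a managed load balancer, the health checks and the skipping logic are handled by the service, which is the operational work this step hands off to AWS.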

4. Validate: Test the failover strategy

Even the best-designed multi-AZ setup can fall short if it hasn’t been tested under real-world failure conditions. Validation gives SMBs confidence that their workloads will actually recover when disruption strikes. By simulating outages, businesses can uncover blind spots before customers ever notice a problem.

AWS actions to take in this step:

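- Configure Amazon Route 53 with health checks and automatic failover policies so traffic reroutes to healthy resources when one AZ becomes unavailable. How quickly this happens depends on the health-check interval, the failure threshold, and the DNS record’s TTL.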

- Use AWS Fault Injection Service (FIS, formerly AWS Fault Injection Simulator) to create controlled failure experiments (e.g., shutting down an EC2 instance or disconnecting a database in one AZ) and validate system responses.

- Enable Amazon CloudWatch Alarms and AWS CloudTrail logs to monitor health and ensure automated failover triggers are working as intended.

- Schedule periodic “game day” exercises where teams intentionally simulate an AZ outage to verify that business continuity plans work end-to-end.
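
Before a game day, it helps to estimate how long DNS-based failover should take, so the team knows what result to expect. The sketch below uses a common rule of thumb: detection time (check interval times failure threshold) plus the record TTL. It is a rough estimate rather than a guarantee, it ignores resolvers or clients that cache beyond the TTL, and the function names are hypothetical.

```python
def estimated_failover_seconds(check_interval: int, failure_threshold: int, dns_ttl: int) -> int:
    """Rough upper bound on time until clients reach the healthy endpoint.

    detection   = check_interval * failure_threshold
    propagation = dns_ttl (cached answers have to expire)
    """
    if check_interval < 1 or failure_threshold < 1 or dns_ttl < 0:
        raise ValueError("interval and threshold must be >= 1, ttl must be >= 0")
    return check_interval * failure_threshold + dns_ttl


def meets_recovery_target(estimate_seconds: int, rto_seconds: int) -> bool:
    """True if the estimated failover time fits within the recovery target."""
    return estimate_seconds <= rto_seconds


if __name__ == "__main__":
    # Example values: 30-second checks, 3 consecutive failures, 60-second TTL.
    estimate = estimated_failover_seconds(check_interval=30, failure_threshold=3, dns_ttl=60)
    print(estimate, meets_recovery_target(estimate, rto_seconds=120))
```

If the estimate exceeds the recovery target, shortening the check interval or the TTL is usually the first thing to try, and the game day then confirms whether the measured time matches.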

5. Optimize: Balance reliability with cost-efficiency

Resilience doesn’t have to break the budget. Once workloads are running reliably across Availability Zones, the next step is fine-tuning for efficiency. SMBs can avoid overspending by using AWS’s pricing models and scaling tools to match capacity with actual demand, ensuring business continuity without waste.

AWS actions to take in this step:

- Configure Amazon EC2 Auto Scaling or ECS/EKS Auto Scaling so workloads adjust dynamically to traffic, keeping services highly available without overprovisioning.

- Blend On-Demand Instances (for steady workloads) with Spot Instances (for burst or flexible jobs) to reduce compute costs while maintaining performance.

- Use AWS Cost Explorer and AWS Budgets to continuously monitor cloud spend, identify cost-saving opportunities, and prevent bill surprises.

- Leverage Amazon S3 Intelligent-Tiering to automatically shift data between storage classes based on usage patterns, reducing costs without sacrificing durability.
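
A quick calculation shows why matching capacity to demand matters. The sketch below compares running peak capacity all month on On-Demand against a steady On-Demand baseline plus Spot capacity for burst hours only. All prices and instance counts are hypothetical placeholders, not AWS list prices, and the function names are invented for this example.

```python
HOURS_PER_MONTH = 720  # 30-day month, for illustration


def fixed_peak_cost(peak_instances: int, on_demand_rate: float,
                    hours: int = HOURS_PER_MONTH) -> float:
    """Cost of keeping peak capacity running on On-Demand all month."""
    return peak_instances * hours * on_demand_rate


def blended_cost(baseline_instances: int, burst_instances: int, burst_hours: int,
                 on_demand_rate: float, spot_rate: float,
                 hours: int = HOURS_PER_MONTH) -> float:
    """Cost of an On-Demand baseline plus Spot capacity for burst hours only."""
    steady = baseline_instances * hours * on_demand_rate
    burst = burst_instances * burst_hours * spot_rate
    return steady + burst


if __name__ == "__main__":
    # Hypothetical hourly rates: 0.10 On-Demand, 0.03 Spot.
    fixed = fixed_peak_cost(peak_instances=12, on_demand_rate=0.10)
    blended = blended_cost(baseline_instances=4, burst_instances=8, burst_hours=72,
                           on_demand_rate=0.10, spot_rate=0.03)
    print(f"fixed peak: {fixed:.2f}, blended: {blended:.2f}")
```

Spot capacity can be reclaimed by AWS, so the burst portion should be work that tolerates interruption. AWS Cost Explorer and AWS Budgets can then be used to check estimates like this against actual spend.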

These steps can help SMBs move beyond traditional IT limitations and build cloud systems that are not only resilient but also cost-effective, giving them the confidence to grow without fearing downtime.

While the steps are clear, many SMBs lack the in-house expertise to design and optimize multi-AZ architectures. AWS Partners like Cloudtech bring certified architects, proven blueprints, and hands-on experience to help businesses implement resilience strategies faster.

How does Cloudtech help SMBs reap the benefits of AWS Availability Zones?

Making the most of AWS Availability Zones can feel overwhelming, as balancing redundancy, failover, and cross-AZ replication is not easy for small teams. Cloudtech guides SMBs through the process, setting up resilient multi-AZ architectures with EC2, RDS/Aurora, S3, and Route 53.

They handle failover, traffic routing, and fault tolerance while keeping costs in check, so businesses get rock-solid uptime and scalability without the headache of managing it themselves.

Ways Cloudtech maximizes AZ benefits for SMBs:

- Assessment and AZ strategy: Cloudtech begins by understanding each SMB’s uptime, compliance, and performance goals. This informs a tailored multi-AZ deployment plan that balances resilience and cost.

- Seamless architecture design: Workloads are distributed across multiple AZs using best practices for compute (EC2, ECS, EKS), storage (S3, EFS), and databases (RDS, Aurora).

- Built-in automation and failover: Using AWS services like Elastic Load Balancing and Route 53, Cloudtech configures automatic traffic routing and failover, so SMB applications stay responsive even when an instance or a zone fails.

- Testing and validation: Cloudtech leverages tools like AWS Fault Injection Service (formerly AWS Fault Injection Simulator) to simulate outages and confirm that failover strategies work as intended before real-world deployment.

- Ongoing optimization: Post-deployment, Cloudtech monitors usage, scales resources, and manages costs through a mix of on-demand, reserved, and spot instances, keeping high availability affordable for SMBs.

With Cloudtech, SMBs don’t just gain access to AWS AZs. They get a frictionless, resilient cloud foundation that ensures applications remain online, secure, and cost-efficient.

See how other SMBs have modernized, scaled, and thrived with Cloudtech’s support →

Wrapping up

AWS Availability Zones give SMBs a reliable way to keep their business running smoothly. They let businesses handle sudden traffic spikes without wasting resources and protect important data through redundancy across physically separate zones.

With Cloudtech’s SMB-focused expertise, companies get the full benefits of AWS AZs without the complexity, gaining resilience, reliability, and peace of mind. Choose AWS and Cloudtech to build a cloud infrastructure that keeps the business always on, always ready, and always competitive.

With Cloudtech, SMBs can confidently build a cloud foundation that drives efficiency, resilience, and growth. Get started with Cloudtech today!

FAQs

1. Can SMBs mix AZs from different regions for extra protection?

Yes, workloads can be distributed across AZs in multiple regions to protect against regional disruptions like natural disasters or major outages. This adds an extra layer of business continuity.

2. How do AZs support hybrid cloud strategies?

AZs can integrate with on-premises or other cloud environments using VPNs or AWS Direct Connect, allowing SMBs to build hybrid architectures that combine local control with cloud scalability.

3. Do AZs improve compliance readiness for SMBs?

Using multiple AZs within a single Region keeps data inside that Region while still providing redundancy. This helps SMBs in regulated industries address data residency and resilience requirements under standards like HIPAA, GDPR, or FINRA.

4. How do AZs help optimize costs while maintaining reliability?